
TL;DR

- Traditional identity governance relies on periodic review cycles, but point-in-time checks detect risks and misconfigurations long after they are introduced. Organizations need to take a new, modern approach to securing identity.

- Current AI-powered identity security systems are not autonomous. They show alerts and generate recommendations but rely on a human trigger before they start taking action.

- Truly autonomous identity security is a fundamental shift, and that’s where Linx Security’s revolutionary new Autopilot AI comes in. Autopilot evaluates access, assesses risk, and either initiates remediation or escalates to a human when oversight is required.

What Are the Limits of Reactive Identity Security?

Reactive identity security and point-in-time checks can’t keep up with the constant change that characterizes modern identity environments, especially at scale. Employees change roles, contractors rotate in and out, and machine identities created for a specific task often linger long after the task is done.

Periodic review cycles made sense in a world where identity was changing slowly and the blast radius of a compromised account was limited. But today, a single compromised identity can cascade across different cloud environments, SaaS platforms, and CI/CD pipelines in minutes.

The 2024 Midnight Blizzard breach at Microsoft illustrates this point. During this attack, threat actors compromised a single legacy, non-production test tenant account, then moved laterally to high-value targets such as the email accounts of Microsoft’s cybersecurity team and senior leadership.

The difficult truth? Identity is now the quickest path attackers can take to reach critical systems, and reactive security isn’t enough.

How Do Identity Risks Emerge Between Reviews?

Identity risk arises from the slow accumulation of misconfigurations and access changes that happen between governance reviews.

Role drift and privilege accumulation are typically the most common sources of identity risk in any organization. An access grant for a specific engineer might have been legitimate when it was approved, but permissions often persist long after a role change makes them irrelevant.

Access entitlements across multiple systems exacerbate this issue, as a single user might have multiple identities and permissions across different cloud providers, SaaS applications, CI/CD platforms, and other tools.

Risks don’t live in these systems in isolation. Think of a user who has read-only access to a production AWS account but admin access to a CI/CD pipeline that can deploy resources to that account. Human reviewers and review tools that look at systems independently won’t catch this escalation path.
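To make that escalation path concrete, here is a minimal sketch that assumes grants from every system have already been normalized into one list of (subject, privilege, object) edges. The node names, the `TRANSITIVE` set, and `find_escalation_paths` are illustrative assumptions, not a Linx API. A breadth-first search across systems surfaces the indirect write path that a per-system review never sees.

```python
from collections import deque

# Hypothetical normalized grants from several systems: (subject, privilege, object).
GRANTS = [
    ("alice", "read", "aws:prod-account"),
    ("alice", "admin", "cicd:deploy-pipeline"),
    ("cicd:deploy-pipeline", "can_deploy_to", "aws:prod-account"),
]

# Privileges that let a subject act *through* the object it holds them on.
TRANSITIVE = {"admin", "can_deploy_to"}


def find_escalation_paths(grants, user, target):
    """Return indirect, write-capable paths from user to target across systems."""
    edges = {}
    for subject, privilege, obj in grants:
        edges.setdefault(subject, []).append((privilege, obj))

    paths = []
    queue = deque([(user, [user])])
    while queue:
        node, path = queue.popleft()
        for privilege, obj in edges.get(node, []):
            if privilege not in TRANSITIVE or obj in path:
                continue  # skip read-only edges and cycles
            new_path = path + [obj]
            if obj == target and len(new_path) > 2:
                paths.append(new_path)  # indirect path: invisible to a per-system review
            else:
                queue.append((obj, new_path))
    return paths
```

Here `find_escalation_paths(GRANTS, "alice", "aws:prod-account")` returns the single path through the CI/CD pipeline, even though Alice's direct AWS grant is read-only.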

And the problem compounds when time enters the equation. When someone is granted permanent elevated access to address a particular issue instead of just-in-time (JIT) admin access, the window between that change and the next governance review becomes especially dangerous.

For example, a developer might get admin access to a production environment to help troubleshoot an outage. Though the incident is resolved within hours, the elevated permissions persist.

If an attacker compromises this account, the blast radius can be significant: They’ll have access to all applications, secrets, and workloads that are running in that production environment. Identity solutions that conduct periodic reviews will eventually catch over-privileged access, but there might be months of exposure in the meantime.
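The difference between JIT and standing access comes down to whether a grant carries an expiry. As a hedged sketch (the `Grant` shape, the `ELEVATED_ROLES` set, and the 24-hour threshold are all assumptions), a continuous check can flag elevated grants with no expiry that have outlived the incident they were created for, instead of waiting for the next review:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Grant:
    identity: str
    role: str
    granted_at: datetime
    expires_at: Optional[datetime]  # None = standing (permanent) access


ELEVATED_ROLES = {"admin", "owner"}  # hypothetical classification


def standing_elevated_grants(grants, now, max_age=timedelta(hours=24)):
    """Flag elevated grants with no expiry that are older than max_age."""
    return [
        g for g in grants
        if g.role in ELEVATED_ROLES
        and g.expires_at is None
        and now - g.granted_at > max_age
    ]


now = datetime(2025, 1, 10, tzinfo=timezone.utc)
grants = [
    Grant("dev-troubleshooter", "admin", datetime(2025, 1, 1, tzinfo=timezone.utc), None),
    Grant("dev-oncall", "admin", now - timedelta(hours=1), now + timedelta(hours=3)),
    Grant("svc-reporting", "reader", datetime(2024, 6, 1, tzinfo=timezone.utc), None),
]
print([g.identity for g in standing_elevated_grants(grants, now)])  # ['dev-troubleshooter']
```

The JIT grant is ignored because it expires on its own; only the leftover troubleshooting access is surfaced.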

Finally, department restructures happen all the time. In fact, with AI adoption, they’re more frequent than ever. These organizational changes shift the access context entirely. For instance, a team that used to need access to a particular environment may no longer exist in the same form. Despite this shift, their permissions usually stay in place until the next review cycle, resulting in over-privileged access on a team-wide scale.

What Is Reactive Tooling? What Is the Alternative?

Many enterprises believe that they’re keeping pace with risks because they’ve invested heavily in Identity Governance and Administration (IGA) platforms and Privileged Access Management (PAM) solutions. But these tools flag risks long after they’ve been introduced.

Even the newer generation of identity security tools that have AI and machine learning (ML) capabilities still function as analysis engines. They identify issues and give you recommendations on how to solve them, but they don’t act on your behalf.

Without automated provisioning and deprovisioning tied directly to lifecycle events, permissions drift between review cycles, and nothing corrects them until the next review.
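A minimal sketch of a lifecycle-driven "mover" handler, assuming a simple role-to-entitlement mapping. `ROLE_ENTITLEMENTS` and `on_role_change` are hypothetical; a real system would call each target application's provisioning API with the result.

```python
# Hypothetical mapping of roles to the entitlements each role justifies.
ROLE_ENTITLEMENTS = {
    "backend-engineer": {"github:backend-repo", "aws:staging-deploy"},
    "data-analyst": {"snowflake:analytics-read"},
}


def on_role_change(current, old_role, new_role):
    """Handle a 'mover' event at the moment it happens.

    Returns (to_grant, to_revoke, needs_review). Entitlements that neither
    role explains (ad hoc grants) are routed to review rather than kept
    or removed silently.
    """
    old = ROLE_ENTITLEMENTS.get(old_role, set())
    new = ROLE_ENTITLEMENTS.get(new_role, set())
    to_grant = new - current             # access the new role needs
    to_revoke = (current & old) - new    # access only the old role justified
    needs_review = current - old - new   # access no role explains
    return to_grant, to_revoke, needs_review
```

Running this on the role-change event, rather than at the next review, is what keeps the old role's access from lingering.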

The organizations that are effectively slashing identity risks are those embracing AI identity security automation in 2026: continuous, always-on coverage from autonomous AI that can detect, prioritize, and remediate access issues in real time, with minimal human oversight.

Why Should You Move From AI Assistance to Autonomous Execution?

Most of what the market today calls “AI-powered identity security” is actually AI-assisted security. As we’ve seen, these tools detect anomalies and generate recommendations. They might identify that a particular user has more privileges than most of their peers or that a service account hasn’t been used for a long period of time. These insights are useful, but AI-assisted tools leave a critical gap between identifying an issue and remediating it.

Depending on a human for input isn’t always the wrong move. Yet workflows where humans have to analyze and act on every notification from AI tools keep engineers trapped in a cycle of alerts. After all, human bandwidth will never be able to match the pace at which identity risks are growing.

To free engineers up to innovate and turbocharge remediation speed, autonomous systems handle straightforward fixes and repetitive actions. They determine when human input isn’t required by evaluating context. Then, they decide on an appropriate response and execute the corresponding workflow.

By leveraging an autonomous security agent, the entire identity security workflow shifts from “send an alert and a recommendation to a human” to “assess the problem, decide what to do about it, and act accordingly.”
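The assess, decide, act loop can be illustrated with a toy decision function. The `Finding` fields, the 0.3 risk cutoff, and the `AUTO_REMEDIABLE` set are illustrative assumptions, not Autopilot's actual logic. The point is that the agent remediates only narrow, reversible, low-impact cases on its own and escalates everything else.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    kind: str                 # e.g. "unused_service_account"
    risk: float               # 0.0-1.0, assumed to come from upstream analysis
    reversible: bool          # can the fix be rolled back safely?
    touches_production: bool


# Hypothetical policy: fix types the agent may apply without a human.
AUTO_REMEDIABLE = {"unused_service_account", "expired_jit_grant"}


def decide(finding):
    """Return 'monitor', 'remediate', or 'escalate' for a single finding."""
    if finding.risk < 0.3:
        return "monitor"
    if (finding.kind in AUTO_REMEDIABLE
            and finding.reversible
            and not finding.touches_production):
        return "remediate"
    return "escalate"  # ambiguous or high-impact: loop in a human
```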

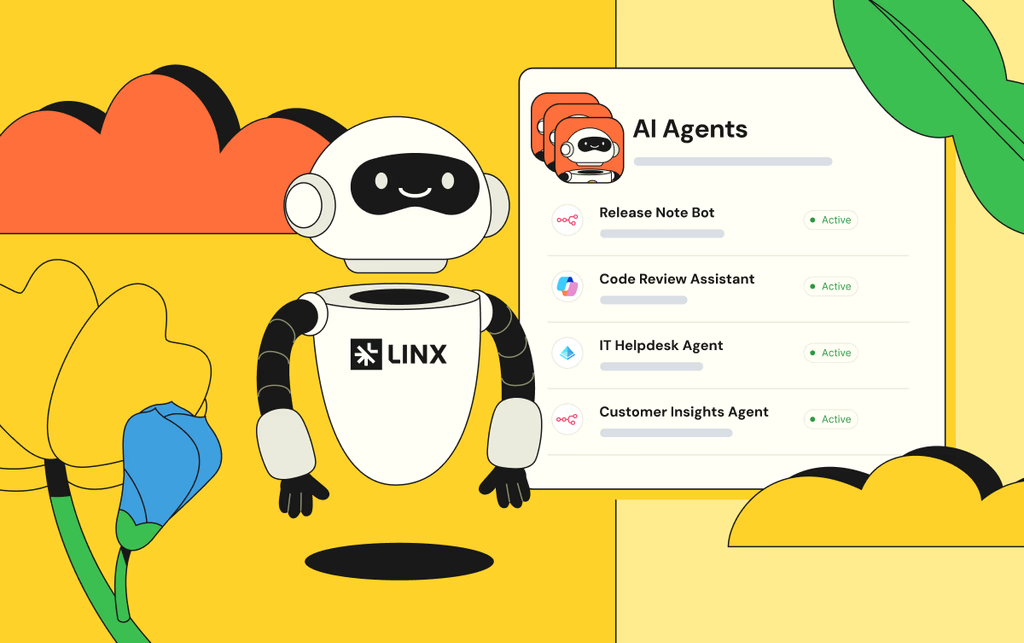

Introducing Autopilot

With Linx Security’s Autopilot, teams can now deploy AI agents that work continuously on their behalf: monitoring their identity environments 24/7, detecting meaningful changes as they happen, evaluating risk in context, and taking action in real time whenever there are issues.

What Does Autopilot Offer?

- Speed and Control: Autopilot evaluates access, assesses risk, and either initiates remediation or escalates to a human when oversight is required, solving the speed-control paradox.

- Governed Autonomy: Autonomy demands trust. Autopilot is designed with that in mind, featuring guardrails and intelligent oversight mechanisms that ensure each autonomous action is carefully controlled.

- Reduced Alert Fatigue: Unlike AI-assisted platforms, Autopilot reduces alert fatigue by looping in humans only when it’s truly necessary.

- Task-Specific Agents: Each Autopilot agent is an expert at a core identity task, such as identifying access drift, tuning profiles, and approving JIT access requests.

- A Comprehensive Suite of Tools: Autopilot is part of a three-tier AI architecture, alongside AI enhancements that continuously optimize and refine your data, and AI Copilot, a personal AI assistant that turns engineers into Linx system superusers.

“Security teams don’t need more noise—they need meaningful leverage,” says Niv Goldenberg, Chief Product Officer and Co-Founder at Linx Security. “Autopilot allows organizations to modernize identity security responsibly, combining continuous AI-driven execution with human expertise.”

Conclusion

In a periodic review model, there’s a gap between when identity risks emerge and when governance catches up. Access changes constantly, governance occurs quarterly, and attackers operate within this window.

With autonomous identity security, agents close this gap: they monitor access changes in real time, evaluate them against the organization's active policies, and take immediate action to resolve any issues.

Autonomous identity security is where Linx stands apart.

“Autopilot marks the beginning of a new chapter for Linx,” says Israel Duanis, CEO and Co-Founder of Linx Security. “Our vision is to build a security platform that doesn’t just inform teams—it operates alongside them. The future of identity security isn’t more alerts or more manual reviews. It’s intelligent systems that continuously strengthen posture while keeping humans in control. This launch establishes Linx as a leader in autonomous identity security and sets the foundation for where our platform is headed.”

If you want to see Autopilot in action, join us for an in-person demonstration during the RSA Conference (March 23–26), or register for our live virtual webinar, Autopilot: Closing the Identity Risk Gap with Autonomous AI, on April 9th at 11 a.m. ET. You can also schedule a demo to get a personalized demonstration.

For the past two years, I've been building agents that expose data residing in different databases. Here, I'd like to share some actionable insights I've gathered along the way.

At Linx, we had to handle extremely high-scale databases for large enterprises. Building agents that perform well with low latency and high accuracy is hard, and the list of challenges is long.

Which model should you use? Should you fine-tune? How do you consume historical query data, and should you perform active learning? What about orchestration: do you go with an agentic framework or keep it vanilla? How do you respond quickly to investigations running against high-scale databases? And how do you rationalize cross-domain information spanning Business, Security, Governance, and Compliance?

These are just a few of the questions we had to answer.

While all of these topics are important, I'd like to focus on a different angle, one that turned out to be even more crucial.

When building an agent, engineers tend to give it tools to query the data, expose the schema, and then assume the agent will perform well from there. It won't.

Imagine you're exposing a schema to a junior analyst who's proficient in your database query language. Will they be able to answer questions about the data correctly? In reality, no. In the following sections, I'll explain why not, and how we solved it.

What Differentiates AI from Humans?

Jeremiah Lowin, in his excellent talk, presents criteria for how LLMs differ from humans when consuming data from APIs. Here's my version for the database problem, which is slightly different:

Real-Life Examples of Non-AI-Friendly Cases

Bad Naming: We had a field called is_external, which actually means “is the email domain external to the organization.” It does not mean the user is external; it's a property of the email itself. That naming alone caused repeated mistakes when the AI was asked about external users: it assumed the user was a guest, which led to incorrect security audit reports.

Different Lingo: We use a graph database. The relationship between a user and their accounts was represented by an edge named owner_of. But the relationship between a user and their secrets was named responsible_for. When someone asks "Who owns this secret?", the agent tries to generate a query using owner_of, even though we explicitly mentioned what types of edges exist and how they operate. As a result, the query returned no results, even though the data existed.

Design for Performance: We have an accounts collection, where each account represents a human in a specific application. We chose to keep the application name and data in another collection, storing only the app ID in the account document so that one could join them to get the app name when needed. This was done to support app renaming without migrating many documents. In reality, since 98% of queries against accounts required the app name, this wasted a huge number of tokens, as the agent generated the same join over and over again. (It also turned out to perform poorly for non-agent use cases.)

Fields That Shouldn't Be Exposed: We had many internal and legacy fields for feature flags, processing states, migration leftovers, and version counters. Humans learn to ignore fields like read_for_processing and migrated. The agent doesn't: in some cases it treats them as meaningful and weaves them into answers, and in others they just waste tokens.

Why Database Schemas Are Built This Way

Your database dialect is set by how your company talks and names things, but customer language doesn't always match your schema's language. The moment users ask questions their way, the gap shows up immediately. While engineering teams optimize for performance, storage, and clean abstractions—all valid priorities—AI agents need something entirely different: clarity and self-explanatory semantics. This disconnect persisted because, until recently, these systems were not customer-facing, and engineers who had to query the data saw no reason to complain.

Why Building a View Is Not Enough

In the MCP presentation, the suggestion is to curate a new API to be consumed by an agent alongside the existing API. One might say, "Let's build a view on top of the existing database and enforce these concepts there." However, I don't think this approach works here, for several reasons:

Views drift. New fields get added to the real model, someone forgets to update the view, and suddenly the CoPilot can't answer questions about new features. For customers, if the product and the AI's "understanding" (the view) diverge by even 5%, the agent becomes unreliable.

Views might require duplicating the data, which is costly (depending on the type of view).

The concepts here aren't only good for an agent, but for anyone trying to access the database. The same mistakes made by an agent are also made by engineers who build queries and assume they correctly understand the schema.

This doesn't mean we can't create new fields to be consumed solely by the AI, or that there won't be fields the AI should ignore. But the majority of data should be streamlined with a process designed to ensure it is AI-Native. There's nothing more frustrating than finding out a day after a new feature was released that it's not exposed by the CoPilot.

Why Can't We Use a DAL (Data Abstraction Layer)?

A Data Abstraction Layer (DAL) is a software layer that sits between the application and the database, providing a simplified interface that hides the complexity of data storage and retrieval.

A DAL addresses many of the issues raised above. It focuses on outcomes, inner joins are already set, fields that should be ignored are removed, and it's usually explainable and optimized for performance.

However, using a query language is almost like writing code. You can do much more, and DALs are always limited by how they were designed and built. With open-ended queries, the possibilities are as broad as the database creator allows—which is usually what customers expect when asking a CoPilot about their data.

DALs are rigid; AI needs the flexibility of SQL but the safety of a DAL.
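One way to approximate "SQL flexibility with DAL safety" is to let the agent write free-form read queries while the database itself enforces what is exposed. The sketch below uses SQLite's authorizer hook through Python's `sqlite3.Connection.set_authorizer`. The `EXPOSED_COLUMNS` allowlist and the `guard_connection` helper are hypothetical, and this is not necessarily how Linx enforces exposure in its own (graph) database.

```python
import sqlite3

# Hypothetical allowlist of what the agent may read: table -> exposed columns.
EXPOSED_COLUMNS = {
    "accounts": {"id", "user_email", "app_name"},
}

# Python's sqlite3 module exposes SQLITE_FUNCTION (31) on modern builds; fall back just in case.
SQLITE_FUNCTION = getattr(sqlite3, "SQLITE_FUNCTION", 31)


def agent_authorizer(action, arg1, arg2, db_name, trigger_or_view):
    """Allow read-only queries over exposed columns; deny everything else."""
    if action == sqlite3.SQLITE_SELECT:
        return sqlite3.SQLITE_OK
    if action == sqlite3.SQLITE_READ:
        # For SQLITE_READ, arg1 is the table name and arg2 the column name.
        if arg2 in EXPOSED_COLUMNS.get(arg1, set()):
            return sqlite3.SQLITE_OK
        return sqlite3.SQLITE_DENY
    if action == SQLITE_FUNCTION:
        return sqlite3.SQLITE_OK  # count(), lower(), ...; tighten to an allowlist in practice
    return sqlite3.SQLITE_DENY    # INSERT, UPDATE, DELETE, PRAGMA, ATTACH, BEGIN, ...


def guard_connection(conn):
    """Install the authorizer on a connection dedicated to the agent."""
    conn.set_authorizer(agent_authorizer)
    return conn
```

Because the check runs when each statement is prepared, `SELECT *` fails as soon as it expands to a hidden column, and any write is rejected outright, while aggregates and joins over exposed columns still work.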

How We Solved These Challenges at Linx

At Linx, we weren't just dealing with flat tables; we were managing a massive Identity Graph. This added a layer of complexity where "truth" isn't found in a single row, but in the relationships between disparate domains—merging Business, Security, Governance, and Compliance data into a single coherent view.

We decided to build multiple tools to help our CoPilot answer customer questions. On the database side, we expose everything by default—new fields require a description and should be immediately discoverable by the agent. Alongside this, we also expose built-in APIs to save time for simple cases.

We have different mechanisms for reducing end-to-end latency around RAG and active learning, but I won't go into them here; they target a different aspect of CoPilot performance and reliability and deserve a separate post.

Engineers can decide to hide fields from the CoPilot explicitly.

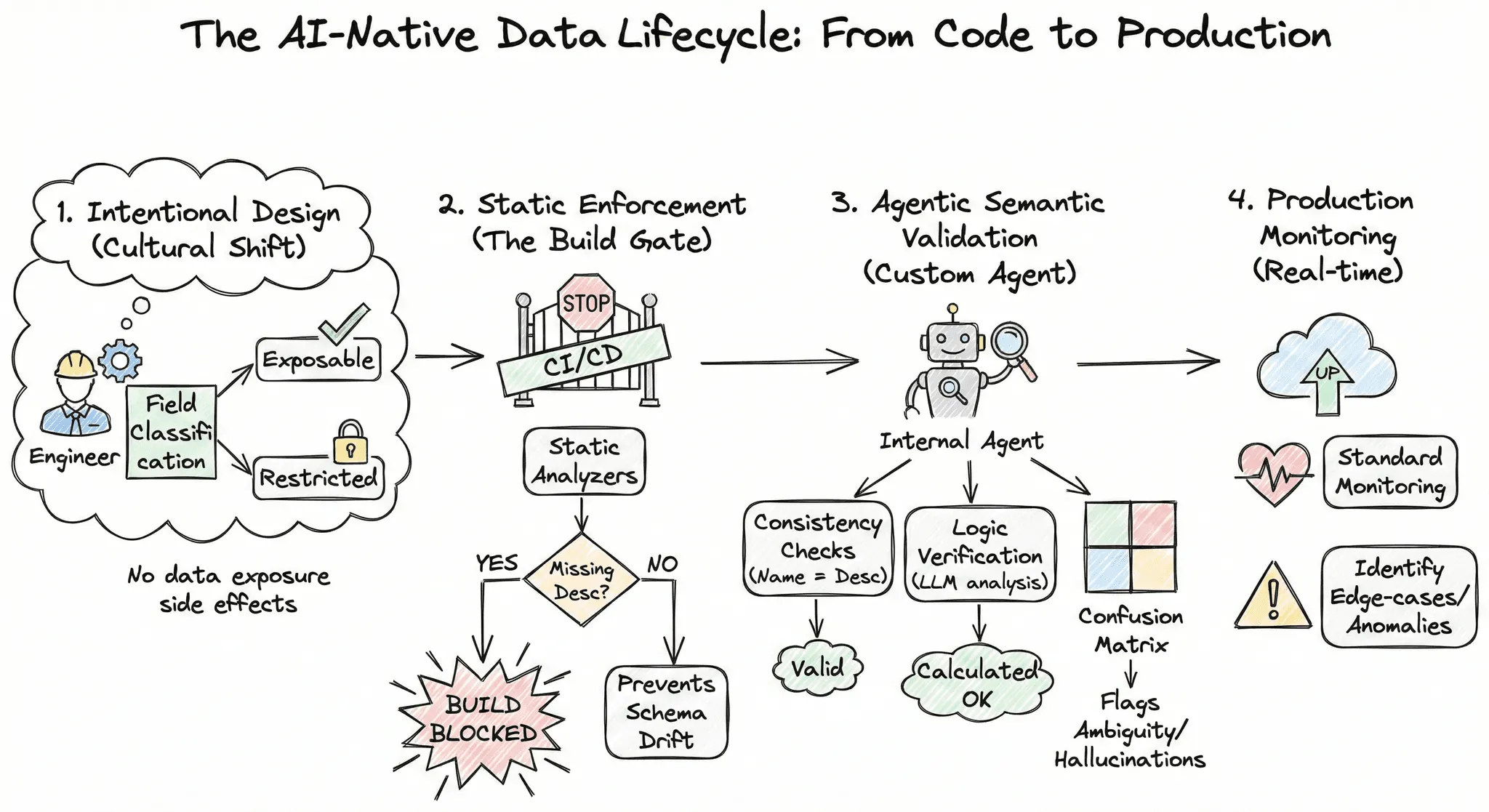

The AI-Native Data Lifecycle: From Code to Production

*Yes, I used Gemini to make this ridiculous diagram; it seemed only fitting given the topic. And it gets the job done!

1. Intentional Design: The journey begins with a cultural shift in how our engineers view data. During the initial design phase, engineers must explicitly classify every new field as either exposable or restricted. This ensures that data exposure is never a side effect, but always a conscious decision.

2. Static Enforcement: We utilize static analyzers to enforce our documentation standards: if a field is marked for exposure but lacks a clear description, the build is blocked. This rigid enforcement prevents "schema drift," ensuring that no new data points are silently added or forgotten without a clear contract.

3. Agentic Semantic Validation: We have developed a custom internal agent specifically designed to validate our data integrity. Rather than relying on basic syntax checks, this agent performs deep semantic analysis:

Consistency Checks: Validates that field names align perfectly with their descriptions.

Logic Verification: Analyzes calculated fields to ensure the underlying logic matches what the name implies to an LLM.

Confusion Matrix: Proactively flags near-duplicate fields or ambiguous naming conventions that could cause "hallucinations" or mix-ups during inference.

4. Production Monitoring: Finally, we maintain standard production monitoring to identify and resolve any edge-case issues or anomalies in real time.
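The first three stages can be sketched in a few lines, assuming field metadata is available as plain Python objects. Everything here (`Field`, `Exposure`, the four-word minimum, the 0.75 similarity threshold, the example field names) is hypothetical. `build_errors` stands in for the static analyzer, and `near_duplicates` is only a lexical pre-filter built on `difflib.SequenceMatcher`, far simpler than the semantic validation agent described above.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from enum import Enum
from itertools import combinations


class Exposure(Enum):
    EXPOSABLE = "exposable"
    RESTRICTED = "restricted"


@dataclass(frozen=True)
class Field:
    name: str
    exposure: Exposure  # no default: every field must be classified explicitly (stage 1)
    description: str = ""


MIN_DESCRIPTION_WORDS = 4  # assumed bar for a "clear" description


def build_errors(fields):
    """Stage 2: exposed fields without a usable description block the build."""
    return [
        f"exposed field '{f.name}' lacks a description"
        for f in fields
        if f.exposure is Exposure.EXPOSABLE
        and len(f.description.split()) < MIN_DESCRIPTION_WORDS
    ]


def near_duplicates(fields, threshold=0.75):
    """Stage 3 (lexical part only): exposed name pairs a model could mix up."""
    names = sorted(f.name for f in fields if f.exposure is Exposure.EXPOSABLE)
    return [
        (a, b)
        for a, b in combinations(names, 2)
        if SequenceMatcher(None, a, b).ratio() >= threshold
    ]


def agent_schema(fields):
    """What the agent actually sees: exposable fields with their descriptions."""
    return "\n".join(
        f"- {f.name}: {f.description}"
        for f in fields
        if f.exposure is Exposure.EXPOSABLE
    )


# Hypothetical example: a renamed is_external, a confusable pair, and a legacy flag.
FIELDS = [
    Field("email_domain_is_external", Exposure.EXPOSABLE,
          "True when the email domain is outside the organization"),
    Field("created_at", Exposure.EXPOSABLE, "When the account was first created"),
    Field("created_on", Exposure.EXPOSABLE),
    Field("migrated", Exposure.RESTRICTED),
]
```

On this example, `build_errors` reports the undocumented `created_on`, `near_duplicates` pairs it with `created_at`, and `migrated` never reaches the agent's context.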

Many Databases, Many Truths

Okay, so far so good, right? I wish it were that easy.

As I continued building, I found that this gets harder as systems mature. Real products don't query a single database. You have an operational DB, an analytics store, a warehouse, a lakehouse, documentation, and now APIs via MCP. The same concept ends up in multiple places with slightly different names or shapes. The model has to guess whether account, tenant, and org are the same thing or three different ones. We check for that too: the same entity exposed under different names across different sources, creating ambiguity.
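A hedged sketch of that cross-source check, assuming each source's entities have already been tagged with a canonical concept (by a human or a reviewing agent). `CATALOG` and `ambiguous_concepts` are illustrative; the output lists every concept that reaches the model under more than one name.

```python
from collections import defaultdict

# Hypothetical catalogs: source -> {exposed entity name: canonical concept}.
CATALOG = {
    "operational_db": {"account": "customer_org", "user": "person"},
    "warehouse": {"tenant": "customer_org", "user": "person", "invoice": "billing_record"},
    "crm_api": {"org": "customer_org", "contact": "person"},
}


def ambiguous_concepts(catalog):
    """Return concepts exposed under more than one name, with the sources using each name."""
    names_by_concept = defaultdict(lambda: defaultdict(set))
    for source, entities in catalog.items():
        for name, concept in entities.items():
            names_by_concept[concept][name].add(source)
    return {
        concept: {name: sorted(sources) for name, sources in names.items()}
        for concept, names in names_by_concept.items()
        if len(names) > 1
    }
```

Here `customer_org` shows up as `account`, `tenant`, and `org`, exactly the kind of ambiguity the model would otherwise have to guess at, while `billing_record` is consistent and is not reported.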

Principles for AI-Native Infrastructure

To sum it up, when building CoPilots that run Text2SQL tasks, we should follow principles that make the CoPilot more reliable (alongside the well-known database metrics we follow, such as performance). Just as we follow SOLID principles when writing code, below is a suggested modified SOLID (or SDDID) for AI-Native infrastructure:

Semantic Naming: Table and field names must be self-explanatory. If is_external refers to an email domain and not a user's status, it must be renamed or aliased for the AI.

Dialect Alignment: The schema should match the mental model of the user. If your customers ask about "Ownership," don't hide that relationship behind technical jargon like responsible_for. Your database dialect must speak the same language as your business.

Documentation: Every exposable field must have a description attribute. This metadata shouldn't live in a separate Wiki; it should be part of the database contract.

Intentional Exposure: Not all data is for AI. Use "AI-Exposability" flags to hide internal flags, version counters, and migration leftovers that confuse the model and waste tokens.

Drift Detection: Implement automated "Semantic Tests" in your CI/CD. If a new field is added without a description or violates naming conventions, the build fails. AI-readiness is a first-class citizen.
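As a sketch of such a semantic test, assume the exposed schema can be exported as a `{field_name: description}` map and compared with the last reviewed snapshot checked into the repository. The snake_case rule and the function name are example assumptions, not a prescribed convention.

```python
import re

SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")  # assumed naming convention


def semantic_drift_errors(current, snapshot):
    """Compare the exposed schema ({field: description}) with the last reviewed snapshot."""
    errors = []
    for name in sorted(current.keys() - snapshot.keys()):
        if not current[name].strip():
            errors.append(f"new field '{name}' has no description")
        if not SNAKE_CASE.match(name):
            errors.append(f"new field '{name}' violates the naming convention")
    for name in sorted(snapshot.keys() - current.keys()):
        errors.append(f"field '{name}' was removed; prompts and examples may still use it")
    return errors
```

In CI, a non-empty list would fail the job (for example, by exiting with a non-zero status), and updating the snapshot becomes the explicit, reviewed act of accepting a schema change.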

Executive TL;DR

With AI agents on the rise, every IAM and security stakeholder must understand that agentic identities aren’t just non-human identities (NHIs) with better automation. They’re ambitious actors who never stop working.

The core shift: risk shows up in the sequence of actions over time, not in any single login, token, or entitlement change.

To govern agents, IAM needs visibility into decision loops and delegation chains, not just credentials.

Old identity assumptions no longer hold

Most IAM programs were built around a clean divide:

- Humans authenticate, receive entitlements, and operate within organizational norms and limits.

- Non-human identities execute narrowly defined workloads with permissions scoped in advance.

Agentic identities fit neither category because they:

- Are persistent decision-makers

- Plan, adapt, and act across multiple steps

- Decide what to do next, which systems to touch, and which tools to use based on goals and context

- Act without waiting for explicit instructions at each turn

That changes what “identity” looks like in practice. Identity does not show up as a single event. It unfolds as behavior, delegation, and a chain of access decisions that change while the agent operates.

Why this breaks traditional IAM

Traditional IAM secures moments in time, such as logins, role assignments, and token issuance. That works when access attempts are made by humans at human speed, or are machine-driven and tightly scoped.

Agents operate between those moments. They decompose goals into steps and discover and request access as they go. One permission unlocks the next. Each request can look reasonable alone, but risk lives in the sequence.

And the security problem often begins after access is granted. With long-running agents, credentials rotate, but intent persists.

What agentic identities are, and what they’re not

Agentic AI systems may comprise a single agent or multiple agents operating in coordination to achieve an outcome. These agents determine paths, tools, and systems to use, and then act on those decisions without continuous human oversight.

That autonomy changes how identity behaves. Unlike traditional NHIs, agentic identities change as agents reason, adapt, and interact with different parts of the enterprise environment.

Agentic identities are

- Goal-driven decision loops that keep operating until an objective is met

- Cross-system actors that express identity through actions, not just a single credential

- Delegation-heavy workflows that often operate through chains of service accounts, roles, tokens, and tools

Agentic identities are not

- Just “smarter bots” running a fixed script

- A single service account or workload identity you can understand in isolation

- Automatically low-risk because credentials are short-lived

How agents consume access in practice

Agents don’t approach a goal by requesting every permission upfront. They break the goal into steps. Each step reveals which tools, permissions, or data they need next, so access is discovered rather than predefined.

Traditional models grant access first and assume that usage comes later. Agents often reverse that flow. They attempt an action, learn what’s missing, and acquire the next layer of access.

Micro-example (how compounding happens):

- Agent starts with read-only analytics access

- It hits a limit and requests config-read access to diagnose the issue

- It determines a change is needed and requests write access in one system

- It delegates the fix to a deployment identity that has broader privileges

Each step can look reasonable alone. The chain is the risk.
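A toy model of the micro-example shows why per-request checks miss the chain. Mapping access to integer levels is a deliberate oversimplification, and both limits are assumptions. Each single step passes the per-step check, while the sequence as a whole fails the chain check.

```python
# Hypothetical privilege ordering for the steps in the micro-example.
LEVELS = {"analytics_read": 1, "config_read": 2, "system_write": 3, "deploy_admin": 4}

PER_STEP_LIMIT = 1  # assumed: a one-level jump per request looks routine
CHAIN_LIMIT = 2     # assumed: max total climb allowed for a single objective


def per_step_violations(steps):
    """Traditional check: compare each request only with the one before it."""
    return [
        i for i in range(1, len(steps))
        if LEVELS[steps[i]] - LEVELS[steps[i - 1]] > PER_STEP_LIMIT
    ]


def chain_violation(steps):
    """Sequence check: how far the whole chain climbed from its starting access."""
    climb = max(LEVELS[s] for s in steps) - LEVELS[steps[0]]
    return climb > CHAIN_LIMIT, climb


steps = ["analytics_read", "config_read", "system_write", "deploy_admin"]
```

`per_step_violations(steps)` finds nothing, while `chain_violation(steps)` reports a three-level climb from read-only analytics to deployment privileges.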

Tool connections can create implicit privilege

Many agents do not authenticate to tools using OAuth tied to the current user. Instead, they use long-lived secrets, API keys, tokens, or service credentials configured in the workflow or agent environment.

This can create a mismatch between who is allowed to run the agent and what the agent can do. A user may have limited direct access to an application, but by running an agent that already has a permanent credential configured, they can trigger actions they should not be able to perform.

From the outside, it still looks like normal tool usage. The risk is that the permissions are effectively inherited through configuration, not granted through an approval process tied to the user and the moment.
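The run-context mismatch reduces to a set difference between what the agent's configured credentials allow and what each person who can run the agent is allowed to do directly. A minimal sketch over a hypothetical inventory (all names are illustrative):

```python
# Hypothetical inventory data.
AGENT_RUNNERS = {"report-agent": {"alice", "bob"}}
AGENT_CREDENTIAL_SCOPES = {"report-agent": {"bi:read", "crm:export"}}
USER_PERMISSIONS = {"alice": {"bi:read", "crm:export"}, "bob": {"bi:read"}}


def run_context_mismatches(agent):
    """For each user who can run the agent, list what the agent can do that the user cannot."""
    scopes = AGENT_CREDENTIAL_SCOPES.get(agent, set())
    mismatches = {}
    for user in sorted(AGENT_RUNNERS.get(agent, set())):
        extra = scopes - USER_PERMISSIONS.get(user, set())
        if extra:
            mismatches[user] = sorted(extra)  # implicit privilege inherited via configuration
    return mismatches
```

Here Bob can trigger a CRM export through the agent that he could not perform directly.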

Why short-lived credentials don’t eliminate identity risk

Short-lived credentials reduce exposure, but they don’t remove accountability gaps.

Agents rely on temporary tokens, delegated credentials, and role sessions. Tokens expire and sessions rotate, but an agent’s intent persists. As one credential ends, another replaces it.

IAM records separate events tied to different identities, but in reality, it is one actor continuing the same reasoning. The question becomes how access combines across identities to reach an outcome. Without that connection, accountability breaks and intent is invisible.

Delegation chains are where context disappears

Delegation is how agents operate at scale, and it’s where visibility erodes fastest.

Agents work through chains of delegation: impersonating service accounts, exchanging API tokens, and federating into workload identities. Each handoff can be valid. Each hop can behave as designed.

The problem is context loss. Most IAM systems record these as separate events. A role assumption here. A token exchange there. What they miss is the sequence that connects them. Without that thread, identity becomes fragmented execution instead of a single decision-maker spanning systems.

Security teams then reconstruct behavior after the fact, inferring intent from logs never meant to explain it.
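Reconnecting the hops is straightforward once each event records what triggered it. That is the key assumption in this sketch: the `parent_id` link (similar to a propagated trace or correlation ID) is hypothetical, and most IAM logs do not carry it by default, which is exactly the gap described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Event:
    event_id: str
    parent_id: Optional[str]  # assumed: each hop records the event that triggered it
    identity: str
    action: str


def delegation_chain(events, event_id):
    """Walk parent links from one event back to the originating agent action."""
    by_id = {e.event_id: e for e in events}
    chain, seen = [], set()
    current = by_id.get(event_id)
    while current is not None and current.event_id not in seen:  # guard against cycles
        seen.add(current.event_id)
        chain.append(current)
        current = by_id.get(current.parent_id) if current.parent_id else None
    return chain[::-1]  # origin first


# Hypothetical log for the "fix the performance issue" scenario.
EVENTS = [
    Event("e1", None, "agent:perf-fixer", "objective: fix performance issue"),
    Event("e2", "e1", "role:diagnostics", "assume diagnostic role"),
    Event("e3", "e2", "sa:deployer", "apply configuration fix"),
    Event("e9", None, "user:carol", "unrelated login"),
]
```

`delegation_chain(EVENTS, "e3")` returns the agent, the diagnostic role, and the deployment identity in order, so the final change can be attributed to the originating objective.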

What this looks like in a real enterprise

Agent permissions in the enterprise can look ordinary until viewed collectively.

Scenario 1: “Fix the performance issue”

An agent detects a performance issue with limited analytics access, assumes a diagnostic role, identifies a configuration problem, and fixes it using a separate deployment identity.

IAM logs each step under a unique identity. None appears risky alone. The real identity is the agent coordinating the entire sequence.

Scenario 2: “Prepare a report”

An agent is asked to prepare a quarterly report. It pulls metrics from a BI tool, then discovers it needs customer fields from a CRM. A developer previously connected the CRM using a long-lived API secret so the agent can “just work.” The agent exports the dataset to a collaboration tool to share with stakeholders.

Each system sees a legitimate action. The risk is the combined outcome: sensitive data moved across systems using credentials that are not tied to the user running the agent, through a chain no single control point evaluated end-to-end.

This is why agentic identities are easy to underestimate. Their behavior only makes sense in the context of a continuous decision loop.

Start here this week

If you do nothing else, start by making agentic identity visible and governable in the places where risk concentrates.

Start here checklist:

- Inventory where agents already operate (workflows, platforms, SaaS copilots, internal tools)

- Identify which identities they execute through (service accounts, roles, tokens, workload identities)

- Inventory agent tool connections and how they authenticate (OAuth vs long-lived secrets)

- Identify run-context mismatches (who can run an agent vs what its configured credentials allow it to do)

- Flag high-impact actions (IAM changes, data exports, production deploys, privilege grants)

- Define guardrails for those actions (allowed tools, allowed targets, approval requirements)

- Require traceability through delegation chains (correlate hops back to the originating agent or workflow)
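The guardrail items in this checklist can start as a small policy table. The actions, tools, and targets below are hypothetical, and this sketch only gates the listed high-impact actions; a stricter policy would deny unknown actions by default.

```python
from dataclasses import dataclass


@dataclass
class Guardrail:
    allowed_tools: set
    allowed_targets: set
    requires_approval: bool


# Hypothetical guardrails keyed by high-impact action type.
GUARDRAILS = {
    "data_export": Guardrail({"bi_connector"}, {"internal-share"}, requires_approval=True),
    "prod_deploy": Guardrail({"deploy_pipeline"}, {"prod-cluster"}, requires_approval=True),
    "iam_change": Guardrail(set(), set(), requires_approval=True),  # never autonomous
}


def check_action(action, tool, target, approved=False):
    """Return (allowed, reason) for one agent action."""
    rule = GUARDRAILS.get(action)
    if rule is None:
        return True, "not a high-impact action"
    if tool not in rule.allowed_tools:
        return False, f"tool '{tool}' not allowed for {action}"
    if target not in rule.allowed_targets:
        return False, f"target '{target}' not allowed for {action}"
    if rule.requires_approval and not approved:
        return False, "human approval required"
    return True, "within guardrails"
```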

This is foundational. You cannot govern what you cannot map.

How risk scales in agentic environments

Agentic identities accumulate risk faster because access is discovered, chained, and delegated across systems and identities.

Compounding access creates sideways expansion

When agents optimize for task completion, privilege expansion becomes autonomous unless guardrails restrict their decision scope.

This can begin with narrow permissions. As the task develops, those permissions unlock the need for additional access. The expansion is not always a single obvious escalation. It can be accumulation across tools and identities.

Least privilege cannot be treated as a one-time entitlement decision. For agents, it has to be enforced continuously for high-impact actions, and evaluated in the context of the decision loop.

Coordination multiplies visibility gaps

Multi-agent coordination multiplies risk because identity context fractures as tasks are delegated across many agents.

Agents collaborate to complete complex workflows. One analyzes data, another diagnoses infrastructure, and a third applies changes. Each may operate under different identities, tools, or domains.

Everything can look normal to individual systems. What disappears is the thread connecting actions into one decision flow. Fragmented visibility lets risk spread even when no single agent appears over-privileged.

Misuse scenarios that matter

New scenarios show up when influence becomes as important as direct access:

- An agent nudges another agent to execute a privileged action, and each individual step appears legitimate.

- An agent splits actions across systems so no single log stream looks suspicious, but the combined outcome is harmful.

These are identity problems because they depend on delegation paths, chains of authorization, and missing end-to-end context.

Why audits and investigations struggle

Audits struggle because execution is easier to record than reasoning.

Logs capture events. They rarely capture why decisions were made or how actions connect across systems. Traditional IAM trails flatten behavior into isolated entries, forcing teams to infer intent after the fact.

In practice, the hardest investigations involve tool connections. If an agent used a stored secret, the audit trail often shows the tool identity, not the human who triggered the run, and not the full chain of connected systems. This is why correlation across the full flow matters, not just collecting more logs.

In an agentic world, accountability depends on connecting:

- the originating objective

- the chain of identities used

- the sequence of actions

- the final outcome

Without that, you can have “complete” logs and still have incomplete answers.

How to explain agentic identity internally

Agentic identity is hard to govern if teams can’t describe it consistently.

One-minute explanation for executives

Agents behave like autonomous digital workers. They can be fast and independent, but risk comes from decision speed multiplied by visibility gaps. IAM must connect intent and execution so you can understand what the agent was trying to do, what identities it used, and what it changed.

Explanation for architects and engineers

Agentic identity works in a loop:

- An agent forms intent

- It discovers missing access

- It executes through an identity (often an NHI)

- It produces an outcome

- The loop repeats until the objective is met

Governance has to follow that loop. Visibility, boundaries, and traceability need to apply across steps, not just at login.

Misconceptions to stop repeating

- Short-lived credentials don’t automatically mean low risk when intent persists.

- Agents aren’t just smarter bots. They’re decision-makers operating across systems.

- When behavior drives access, risk shows up in the chain, not a single event.

- Agents are not just NHIs.

What this means for your IAM program

The rise of agentic identity necessitates a new approach.

- Treat decision sequences as first-class security objects, not just entitlements.

- Correlate actions across tokens, roles, and NHIs back to the originating agent or workflow.

- Define guardrails for what decisions agents can make as context changes, especially for high-impact actions.

This allows autonomy and delegation to be treated as identity behaviors that require supervision and traceability.
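Guardrails like these can be written as a small decision function over an action and its context. This is a minimal sketch under stated assumptions. The impact tiers and action names are hypothetical, and so is the choice of "environment" and "owner present" as the context signals.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # a human must approve before the agent proceeds
    DENY = "deny"


# Hypothetical impact tiers; each program would define its own.
HIGH_IMPACT = {"delete_data", "grant_permission", "disable_mfa"}
MEDIUM_IMPACT = {"write_data", "create_token"}


def guardrail(action: str, environment: str, owner_present: bool) -> Decision:
    """Decide what an agent may do given the action and the current context."""
    if action in HIGH_IMPACT:
        # High-impact actions always need a human; with no accountable owner, refuse.
        return Decision.ESCALATE if owner_present else Decision.DENY
    if action in MEDIUM_IMPACT and environment == "production":
        return Decision.ESCALATE
    return Decision.ALLOW


# The same action gets a different decision when the context changes.
print(guardrail("write_data", "staging", owner_present=True))
print(guardrail("write_data", "production", owner_present=True))
```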

Conclusion

Agentic identities change the shape of identity risk. The biggest shift is not that agents authenticate differently. It is that they operate continuously, discover access as they go, and act through delegation chains that fragment context.

If IAM continues to govern only logins, roles, and tokens in isolation, it will miss the behavior that matters. The path to control starts with visibility into where agents run, what identities they use, and how multi-step decisions connect to outcomes.

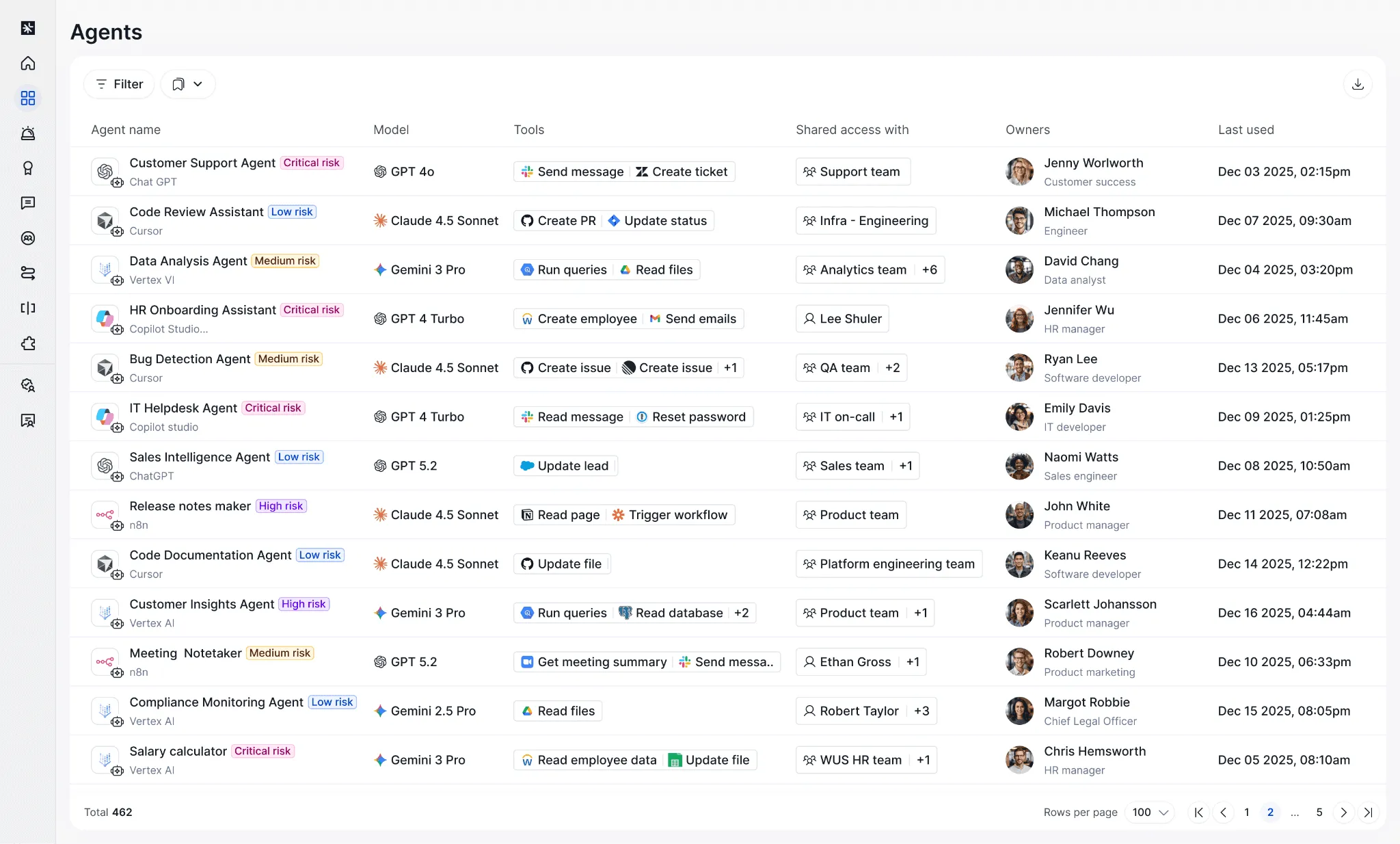

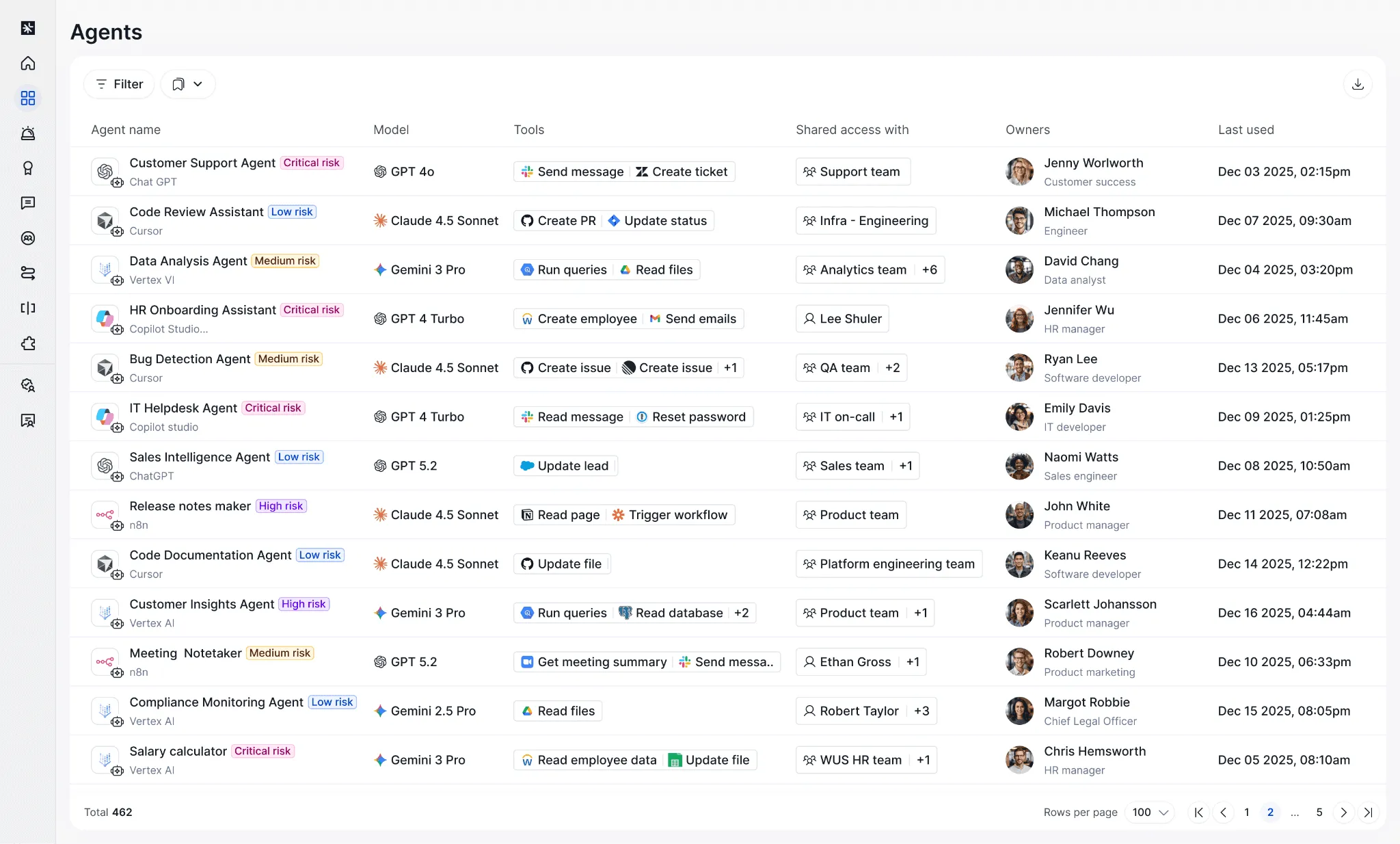

Agent discovery with Linx: visibility with context

Linx provides Agentic Identity Discovery and Governance to close that gap and give teams a single platform to secure and govern human, non-human, and agentic identities.

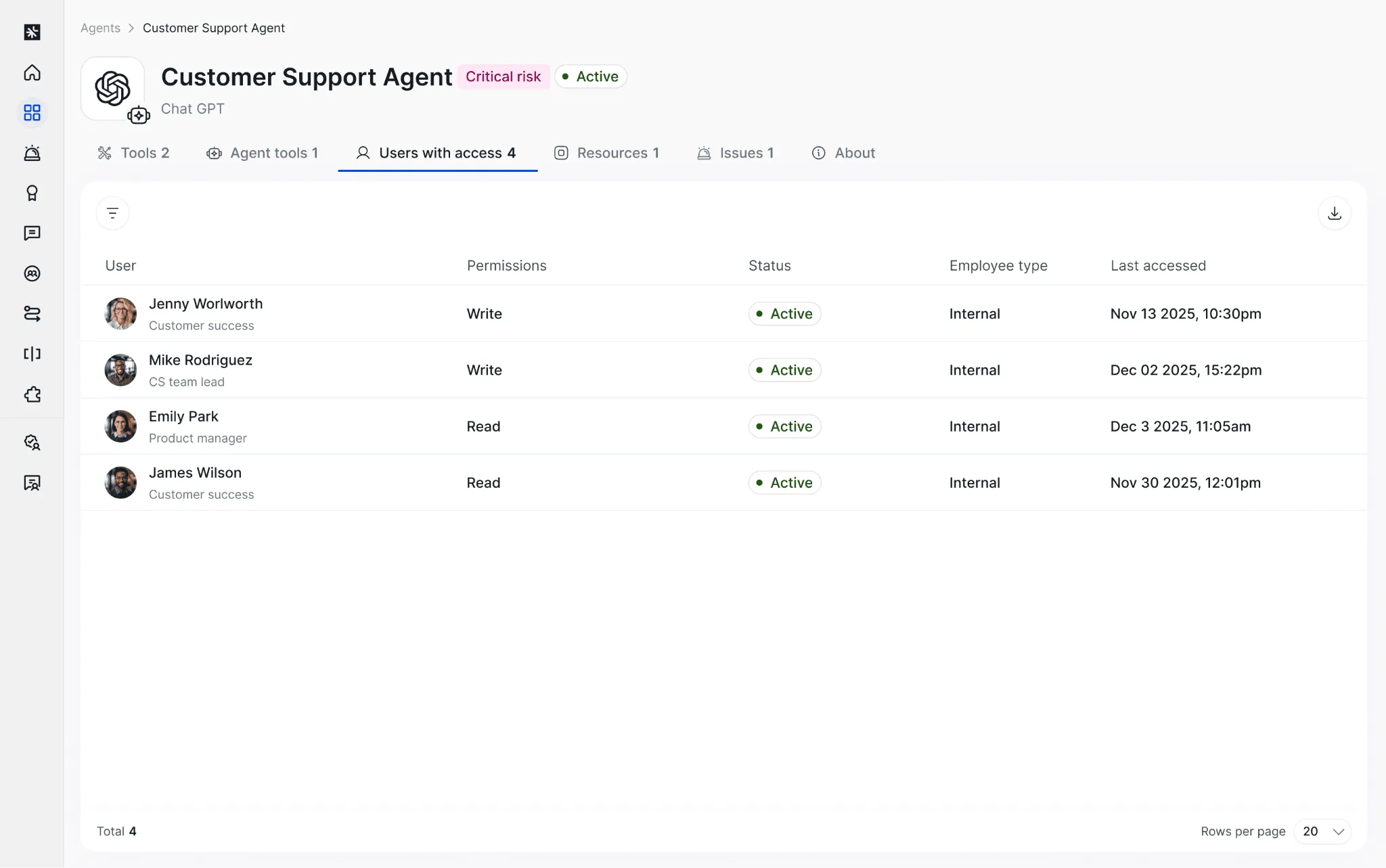

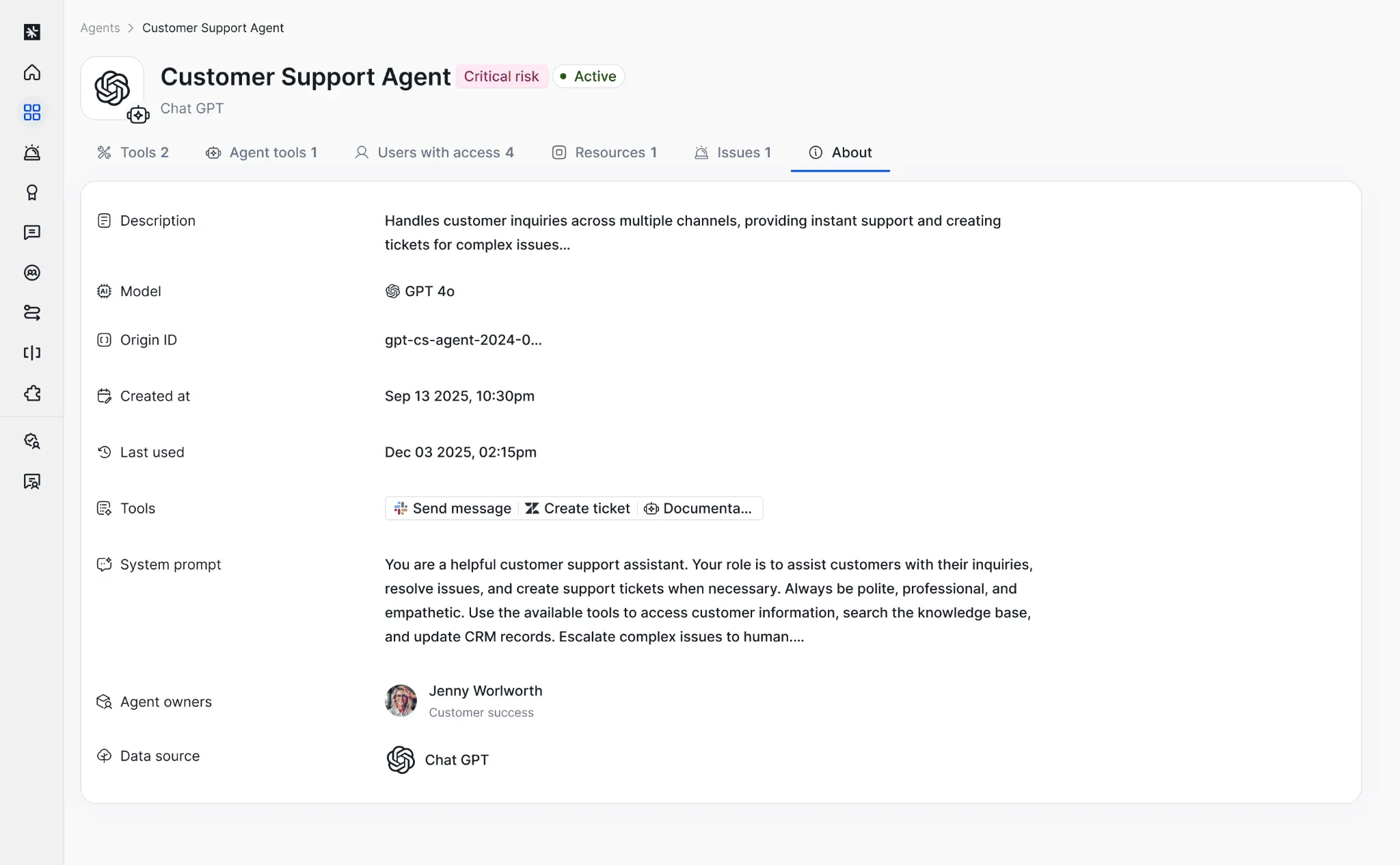

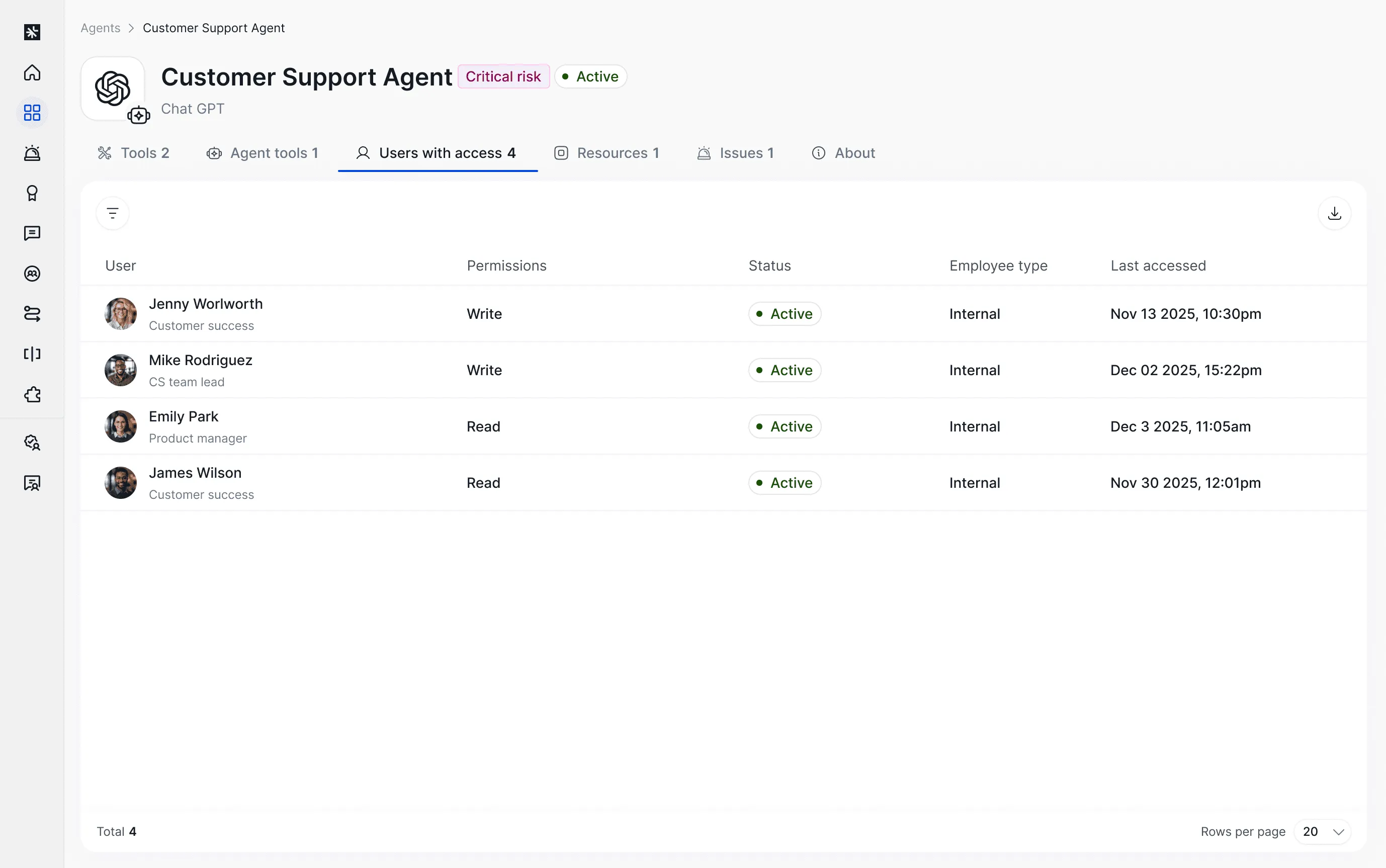

Along with your human and non-human identities, you can discover which agents are in use, who has access to them (humans or other agents), and what those agents have access to.

Who is allowed to run an agent vs what the agent can reach

Agents often carry permanent access through long-lived secrets and pre-wired tool connections. Linx helps you see mismatches between who can execute or influence an agent and what the agent’s configured credentials allow it to do. This makes implicit privilege visible so teams can remediate it.
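The core of this mismatch check can be sketched in a few lines. For each person who can run the agent, compare what the agent's credentials reach against that person's own access. Anything left over is privilege the person gains indirectly. This minimal Python sketch assumes access is modeled as plain sets of resource strings. That model is for illustration only and is not how Linx represents it.

```python
def implicit_privilege(invokers: dict[str, set[str]],
                       agent_access: set[str]) -> dict[str, set[str]]:
    """For each invoker, resources reachable through the agent but not directly."""
    gaps: dict[str, set[str]] = {}
    for user, own_access in invokers.items():
        extra = agent_access - own_access  # what the agent adds on top of the user's access
        if extra:
            gaps[user] = extra
    return gaps


invokers = {
    "alice": {"crm:read", "billing:read"},
    "bob": {"crm:read"},
}
agent_access = {"crm:read", "billing:read", "billing:write"}  # e.g. from a stored secret
print(implicit_privilege(invokers, agent_access))
```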

Agent to app flow mapping across connected tools

Developers connect agents to many apps quickly, often through generic HTTP and API components or custom connectors. Linx correlates agent activity into an end-to-end flow from agent to application to resource. This helps teams understand blast radius, spot unexpected connections, and investigate multi-step behavior without stitching evidence together manually.
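After activity is correlated into edges (agent to application, application to resource), blast radius becomes a reachability question. Below is a minimal Python sketch that answers it with breadth-first search. The node names are hypothetical, and collecting the edges in the first place is out of scope here.

```python
from collections import deque


def blast_radius(edges: list[tuple[str, str]], start: str) -> set[str]:
    """Every node reachable from start by following observed flows (breadth-first)."""
    graph: dict[str, list[str]] = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
    reachable: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt != start and nxt not in reachable:
                reachable.add(nxt)
                queue.append(nxt)
    return reachable


flows = [
    ("agent:support-bot", "app:zendesk"),
    ("app:zendesk", "resource:tickets"),
    ("agent:support-bot", "app:http-connector"),   # generic HTTP component
    ("app:http-connector", "resource:billing-api"),
]
print(sorted(blast_radius(flows, "agent:support-bot")))
```

In this toy data, the path through the generic HTTP connector to a billing API is the kind of unexpected connection that an end-to-end view is meant to surface.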

Which agents exist and who owns them?

Get an inventory of agents and quickly flag ones without clear owners.

Who can access the agent?

You can see which accounts have access to an agent, which helps you understand who can run it, manage it, or influence its behavior.

What does the agent have access to?

You can review the permissions and resources the agent can reach, including signals that help you prioritize what to look at first, and how the agent got the access in the first place (API token, service account, OAuth, etc.).
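As a rough illustration of these three questions, the sketch below models an agent inventory record and produces a simple review. It lists the agents without owners and groups each agent's access by how that access was obtained. The `AgentRecord` shape and the sample data are hypothetical and do not reflect how Linx stores this information.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentRecord:
    name: str
    owner: Optional[str]
    grants: dict[str, str] = field(default_factory=dict)  # resource -> how access was granted


def review(agents: list[AgentRecord]) -> dict[str, list[str]]:
    """Flag ownerless agents and group every grant by its access mechanism."""
    report: dict[str, list[str]] = {"ownerless": []}
    for agent in agents:
        if not agent.owner:
            report["ownerless"].append(agent.name)
        for resource, mechanism in agent.grants.items():
            report.setdefault(mechanism, []).append(f"{agent.name} -> {resource}")
    return report


agents = [
    AgentRecord("invoice-bot", "finance-team", {"erp:invoices": "service account"}),
    AgentRecord("scratch-agent", None, {"github:repo": "API token", "drive:shared": "OAuth"}),
]
for key, items in review(agents).items():
    print(key, items)
```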

Bring agentic identities into your existing governance program

Most IAM teams already have a set of routines that work: access reviews, ownership assignment, least privilege, and remediation. Linx incorporates agentic identities into the same platform you use to secure and govern human and non-human identities across SaaS, cloud, and on-prem applications. That keeps visibility and governance consistent, even as identity types expand.

TL;DR

- AI agents are showing up across teams and tools, but most IAM programs don’t have a clear understanding of which agents are in use and who uses them.

- Linx offers agentic identity discovery and governance so you can inventory agents, understand ownership, and review what they can access.

A new type of identity

AI agents are becoming part of day-to-day work. Teams use them to automate workflows, write and run code, move data between systems, and perform tasks that previously required a person to click through multiple tools.

Like most new technologies, the first challenges from a security and governance perspective are visibility and understanding. If you can’t see which agents exist, who owns them, who can use them, and what access they have, you can’t make informed decisions about risk.

Linx provides Agentic Identity Discovery and Governance to close that gap and give teams a single platform to secure and govern human, non-human, and agentic identities.

Agent discovery with Linx: visibility with context

Along with your human and non-human identities, you can discover which agents are in use, who has access to them (humans or other agents), and what those agents have access to.

Which agents exist and who owns them?

Get an inventory of agents and quickly flag ones without clear owners.

Who can access the agent?

You can see which accounts have access to an agent, which helps you understand who can run it, manage it, or influence its behavior.

What does the agent have access to?

You can review the permissions and resources the agent can reach, including signals that help you prioritize what to look at first.

Bring agentic identities into your existing governance program

Most IAM teams already have a set of routines that work: access reviews, ownership assignment, least privilege, and remediation. Linx incorporates agentic identities into the same platform you use to secure and govern human and non-human identities across SaaS, cloud, and on-prem applications. That keeps visibility and governance consistent, even as identity types expand.

Get started

If you’re already using Linx, start by opening the “agents” tab under “discovery”. If you want to see Agentic Identity Discovery and Governance in action, reach out to your Linx team for a walkthrough and best-practice guidance on how to operationalize it in your environment.

In modern IT ecosystems, identity security has become a cornerstone of organizational resilience. As enterprises adopt increasingly complex digital infrastructures, managing and safeguarding identity and access relationships is critical to preventing unauthorized access, mitigating insider threats, and ensuring regulatory compliance. However, untangling the web of identity and access relationships often requires advanced technical skills, which prevents security and IT teams from extracting crucial insights efficiently. Querying complex identity data should be straightforward and accessible, even for people without expertise in query syntax.

The Complexity of Identity and Access Relationships

The domain of Identity and Access Management (IAM) has undergone substantial evolution. Enterprises today administer vast identity landscapes comprising thousands to millions of entities, each equipped with multifaceted access entitlements spanning applications, cloud infrastructures, and enterprise systems. Comprehending access structures and their security implications is challenging.

Organizations must routinely address critical security inquiries, including:

- Which users have excessive access privileges beyond their job requirements?

- Are there dormant or inactive accounts with high-level access?

- How do cross-system permissions impact compliance and risk mitigation?
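Once account data is in one place, the second question reduces to a filter. Here is a minimal Python sketch. It assumes a 90-day dormancy threshold and a hypothetical set of privileged role names. Neither value comes from any standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Account:
    name: str
    roles: set[str]
    last_login: datetime


# Assumed values for illustration; set both from your own policy.
PRIVILEGED_ROLES = {"admin", "super_admin"}
DORMANCY = timedelta(days=90)


def dormant_privileged(accounts: list[Account], now: datetime) -> list[str]:
    """Names of accounts holding a privileged role with no login within DORMANCY."""
    return [
        a.name
        for a in accounts
        if a.roles & PRIVILEGED_ROLES and now - a.last_login > DORMANCY
    ]


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
accounts = [
    Account("alice", {"admin"}, now - timedelta(days=200)),
    Account("bob", {"admin"}, now - timedelta(days=3)),
    Account("carol", {"viewer"}, now - timedelta(days=400)),
]
print(dormant_privileged(accounts, now))
```

The hard part in practice is not this filter. It is assembling roles and login times from many systems into one consistent view.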

Answering these questions requires a deep examination of identity relationships that are often spread across disparate repositories. Traditional relational databases, constrained by rigid schemas, struggle to model such dynamic and interdependent relationships, which calls for a better-suited paradigm: graph databases.

The Power of Graph Databases in Modeling Complex Relationships

Graph databases are inherently designed to represent and interrogate complex relationships with a high degree of efficiency. Unlike relational databases that encapsulate data within fixed tabular formats, graph databases structure information as nodes (entities such as users, roles, or resources) and edges (the relationships connecting these entities).

From an identity security perspective, graph databases facilitate:

- A holistic visualization of access interdependencies

- The identification of implicit and inherited permissions

- Optimized querying for detecting security vulnerabilities

For instance, a graph database can quickly determine whether an individual has indirect administrative privileges to a mission-critical system through nested group memberships. While this model offers significant advantages, it also introduces an operational challenge: querying graph databases requires specialized expertise.
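In Cypher, this kind of check is usually written with a variable-length relationship pattern such as `-[:MEMBER_OF*]->`, where `MEMBER_OF` is a hypothetical relationship name. The minimal Python sketch below performs the same traversal over an in-memory adjacency map, to show what the database is doing underneath. The group names and the `admin_grants` mapping are made up for illustration.

```python
def effective_groups(principal: str, member_of: dict[str, set[str]]) -> set[str]:
    """All groups a principal belongs to, directly or through nested groups."""
    seen: set[str] = set()
    stack = list(member_of.get(principal, set()))
    while stack:
        group = stack.pop()
        if group not in seen:
            seen.add(group)
            stack.extend(member_of.get(group, set()))  # follow groups nested in groups
    return seen


def admin_via(user: str, system: str, member_of: dict[str, set[str]],
              admin_grants: dict[str, set[str]]) -> set[str]:
    """Groups (direct or nested) through which the user holds admin on a system."""
    return {g for g in effective_groups(user, member_of)
            if system in admin_grants.get(g, set())}


member_of = {
    "dana": {"contractors"},
    "contractors": {"eng-all"},
    "eng-all": {"prod-ops", "contractors"},  # cycles happen in real directories
}
admin_grants = {"prod-ops": {"payments-db"}}
print(admin_via("dana", "payments-db", member_of, admin_grants))
```

Dana is never granted anything directly, yet the traversal shows admin rights on `payments-db` reaching her through three levels of nesting.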

The Complexity of Querying a Graph Database

Despite their superior capability in modeling identity security, graph databases impose a steep learning curve. Extracting meaningful insights demands proficiency in sophisticated query languages such as Cypher, Gremlin, or SPARQL. Mastering these languages entails:

- A deep understanding of graph traversal algorithms

- Competence with complex query syntax

- The ability to debug and optimize intricate queries for performance

For example, in Cypher, a query to find all users with direct access to high-sensitivity resources might look like this:

```cypher
MATCH (u:User)-[:HAS_ACCESS]->(r:Resource)
WHERE r.sensitivity = 'High'
RETURN u.name, r.name;
```

This query returns every user with a direct HAS_ACCESS relationship to a resource marked as high sensitivity. It is powerful, but writing queries like it takes specialized expertise. Security analysts, IAM administrators, and compliance teams frequently lack the knowledge or bandwidth to become fluent in such query languages. As a result, they remain dependent on data engineering teams, which undermines the agility that proactive identity security management requires.

Removing the Complexity Barriers with Linx AI-Assistant

With AI and natural language processing (NLP) making huge strides, querying graph databases no longer requires deep technical skills. The Linx AI assistant lets users ask security-related questions in plain English and get instant, actionable insights.

Instead of formulating a complex query to enumerate all users with privileged access, one can simply ask: "Show me all dormant admin accounts in Okta that don't have MFA"

Instead of contending with Gremlin to analyze inherited permissions, a user can query: "Show me all users that have administrative permission in Snowflake"

By eliminating the need for complicated query syntax, Linx makes every user an expert. Security teams can boost efficiency, strengthen their identity security practices, and quickly get the answers they need.

See it in action

Don't let complex query languages slow you down! With natural language querying, you can get instant answers to your most pressing identity security questions—no technical expertise required. Whether you're tracking down overprivileged accounts or identifying risky access patterns, it's never been easier to take control of your identity data.

Try it now and experience how intuitive querying can enhance security, streamline decision-making, and empower your team. Start your journey toward simplified identity security today!

The Rise of AI Agents in the Workforce: A New Era for Identity and Access Management

As we enter 2025, the integration of AI agents into the workforce is no longer a distant possibility—it’s imminent. Sam Altman’s recent prediction that AI agents will begin materially contributing to the workforce this year is both exciting and challenging. These autonomous systems are poised to revolutionize productivity, enabling organizations to scale operations and tackle complex problems like never before. But with this promise comes an urgent need to rethink security, governance, and Identity and Access Management (IAM).

The rise of AI agents marks a transformative shift for IAM, which must now extend beyond managing human identities to include these intelligent digital workers. The implications for security and identity governance are profound. Here’s why, how businesses must prepare, and how Linx Security is uniquely positioned to help.

AI Agents and the Expanding Attack Surface

AI agents don’t just access data; they generate, process, and act on it autonomously. They can collaborate with human employees, make decisions, and even execute tasks across a company’s systems. This level of autonomy introduces a new layer of complexity and risk to IAM.

Understanding the Risks

AI agents introduce several new risks that organizations must address proactively:

- Compromised Agents: If an AI agent is compromised, it could lead to unauthorized data access, fraudulent actions, or even complete operational shutdowns. These agents, often granted significant access to sensitive systems, become high-value targets for attackers.

- Unmanaged Access: Without proper identity controls, AI agents may unintentionally overreach their access permissions, exposing sensitive systems or data. For instance, an AI agent might mistakenly escalate privileges or access systems it was not intended to.

- Insider Threats: AI agents programmed with malicious intent or manipulated by insiders can carry out harmful activities with efficiency and scale, compounding risks traditionally associated with human employees.

The Solution

Organizations must secure the identities of AI agents with the same rigor as human users. This involves:

- Adaptive Access Policies: Implementing dynamic, context-aware policies that adjust to the agent’s role and activities.

- Behavioral Analytics: Monitoring AI agents for anomalies in behavior, such as unusual access patterns or unexpected actions.

- Zero-Trust Architectures: Enforcing a zero-trust model that requires verification for every access request, regardless of the agent’s perceived trust level.
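The zero-trust and behavioral ideas can be combined in a short sketch. Every request is evaluated against the policy for the agent's role. Permitted requests are still flagged when they touch a resource outside the agent's observed baseline. The role name, the permission sets, and the three possible outcomes are hypothetical.

```python
# Hypothetical policy: resources each agent role may ever touch.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "reporting-agent": {"warehouse:sales", "warehouse:marketing", "warehouse:hr"},
}


def evaluate(role: str, resource: str, baseline: set[str]) -> str:
    """Evaluate one request; meant to run on every request, not once per session."""
    if resource not in ROLE_PERMISSIONS.get(role, set()):
        return "deny"             # outside policy, regardless of past behavior
    if resource not in baseline:
        return "allow_and_alert"  # permitted, but new for this agent: flag for review
    return "allow"


baseline = {"warehouse:sales", "warehouse:marketing"}  # learned from past activity
for resource in ["warehouse:sales", "warehouse:hr", "crm:contacts"]:
    print(resource, evaluate("reporting-agent", resource, baseline))
```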

The Role of Governance in the AI-Driven Workforce

The integration of AI agents calls for robust governance frameworks to ensure accountability and compliance.

Key Governance Challenges

Governance for AI agents extends beyond traditional frameworks, requiring:

- AI Accountability: Who is responsible for an AI agent’s decisions and actions? Clear ownership and accountability are essential. Companies need to define who oversees AI agents’ activities and how these agents are supervised.

- Auditing AI Activities: Every action taken by an AI agent must be logged, traceable, and auditable. This ensures compliance with regulatory standards and provides a clear trail for forensic analysis in case of incidents.

- Regulatory Compliance: As AI agents gain autonomy, the data they handle and the actions they take fall within the scope of compliance frameworks such as GDPR, HIPAA, and SOX. Ensuring AI agents operate within these regulations is critical to avoid legal and financial repercussions.
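Logging alone does not make an audit trail trustworthy. One common technique for making a log tamper-evident is hash chaining, in which each entry includes the hash of the entry before it. It is offered here as an illustration; the text itself does not prescribe it. The minimal Python sketch below uses hypothetical field names. A production system would also add timestamps, signing, and write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], agent: str, action: str, resource: str) -> dict:
    """Append an audit entry whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"agent": agent, "action": action, "resource": resource, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute the chain; an edited entry or one removed mid-log breaks it."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("agent", "action", "resource", "prev")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "agent:reporter", "read", "warehouse:sales")
append_entry(audit_log, "agent:reporter", "export", "s3://reports")
print(verify(audit_log))
audit_log[0]["resource"] = "warehouse:hr"  # simulate after-the-fact tampering
print(verify(audit_log))
```

Truncating the newest entries is not detected by the chain alone. This is one reason real deployments anchor the latest hash somewhere outside the log.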

How IAM Supports Governance

A modern IAM solution provides the tools to monitor, log, and audit AI agent activities in real time, ensuring transparency and compliance. Linx Security’s platform, for example, integrates advanced auditing capabilities, offering unparalleled visibility into both human and AI identities. Additionally, Linx’s automated reporting features help organizations stay compliant with evolving regulations.

Security Challenges in Managing AI Agents

AI agents often require elevated permissions to perform their tasks. Managing these permissions is critical to minimizing risk.

Key Security Considerations

- Credentialing AI Agents: Traditional credentials like API keys and static passwords are insufficient. AI agents require dynamic, context-aware authentication mechanisms to ensure secure access.

- Privileged Access Management (PAM): Over-permissioning is a significant risk. Privileged access must be tightly controlled, with permissions granted on a just-in-time basis to minimize exposure.

- Segmentation and Isolation: Ensuring that AI agents operate within segmented networks and isolated environments reduces the blast radius of potential security breaches.
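To illustrate short-lived, scoped, just-in-time credentials, here is a minimal Python sketch that uses only the standard library. The token format is hand-rolled for clarity and is an assumption. In practice you would use a standard format such as JWT through a maintained library, or your cloud provider's token service, and you would keep the signing key in a secrets manager. The five-minute default lifetime is also an assumption.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"replace-with-a-managed-key"  # hypothetical; load from a secrets manager


def issue_token(agent: str, scopes: list[str], ttl_seconds: int = 300) -> str:
    """Issue a signed token granting only the listed scopes, for a short time."""
    claims = {"sub": agent, "scopes": sorted(scopes), "exp": int(time.time()) + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode()).decode()
    sig = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{sig}"


def authorize(token: str, scope: str) -> bool:
    """Check signature, expiry, and scope every time the token is used."""
    payload, _, sig = token.rpartition(".")
    expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False  # forged or altered token
    claims = json.loads(base64.urlsafe_b64decode(payload))
    return claims["exp"] > time.time() and scope in claims["scopes"]


token = issue_token("agent:patcher", ["servers:restart"])
print(authorize(token, "servers:restart"), authorize(token, "servers:delete"))
```

Because the credential expires on its own and carries only the scopes requested for the task, an over-permissioned or leaked token has a smaller window and a narrower reach than a static API key.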

Linx Security’s Approach

Linx Security employs intelligent, risk-based policies to secure privileged access for AI agents while maintaining operational efficiency. This ensures that AI agents can perform their tasks without compromising security. Additionally, Linx’s real-time monitoring tools detect and respond to anomalies, providing an additional layer of defense.

A Tech-Forward Approach to AI IAM

The integration of AI agents demands a forward-thinking IAM strategy. Traditional IAM systems are ill-equipped to handle the scale, complexity, and autonomy of AI agents. Businesses need solutions designed for this new paradigm.

What’s Needed

To effectively manage AI agents, organizations require IAM systems with:

- Adaptive Identity Frameworks: Capable of scaling with AI agent deployments, these frameworks dynamically adjust permissions and access based on the agent’s current context and behavior.

- Real-Time Monitoring and Response: Continuous oversight ensures anomalies are detected and mitigated before they can escalate into incidents.

- Integration with AI Governance Tools: Seamless integration with AI-specific governance platforms enables unified management and oversight.

How Linx Leads

Linx Security’s platform is built to meet these challenges head-on. By combining policy automation, intelligent workflows, and advanced monitoring, Linx enables organizations to securely and confidently manage both human and AI identities. Additionally, Linx’s integration capabilities allow for seamless adoption of AI governance tools, ensuring holistic oversight.

Preparing for 2025 and Beyond

The introduction of AI agents into the workforce is a watershed moment for businesses. To harness their potential while mitigating risks, organizations must act now.

Action Steps for Businesses

- Conduct a Readiness Assessment: Evaluate whether your current IAM policies can accommodate AI agents. Identify gaps and prioritize areas for improvement.

- Transition to Dynamic Identity Frameworks: Move beyond static access models to adaptive, real-time systems capable of handling AI-specific complexities.

- Collaborate Across Teams: Ensure cybersecurity, IT, and business units work together to define AI governance policies and align on IAM strategies.

- Invest in Advanced IAM Solutions: Adopt IAM platforms, like Linx Security, that are designed to address the challenges posed by AI agents.

Conclusion: Securing the AI-Driven Future

The rise of AI agents represents an unparalleled opportunity for businesses to innovate and scale. But without a robust IAM framework, the risks could overshadow the benefits. As companies prepare for this new era, they must prioritize security, governance, and scalability in their IAM strategies.

At Linx Security, we’re not just keeping up with this future—we’re defining it. Our cutting-edge IAM solutions are built to address the complexities of managing both human and AI identities, ensuring your organization is ready for the AI revolution.

Are you prepared for the AI workforce? Learn how Linx Security can help you build the IAM framework you need to succeed. Schedule a demo today.
